My Company Traitwell's Future of Genomics Series

The first of a series of posts about genomics and its future. We begin by examining the past.

I’ve been traveling this past week, so forgive me for not writing as much. A few odds and ends will be coming out over the weekend and into the coming week. I often get asked what’s going on with Traitwell and what we are up to, and hopefully this post can help explain it.

My colleague Gavan Tredoux has laid out a very compelling vision for where we are going with Traitwell, the genetics company I cofounded and where I serve as CEO.

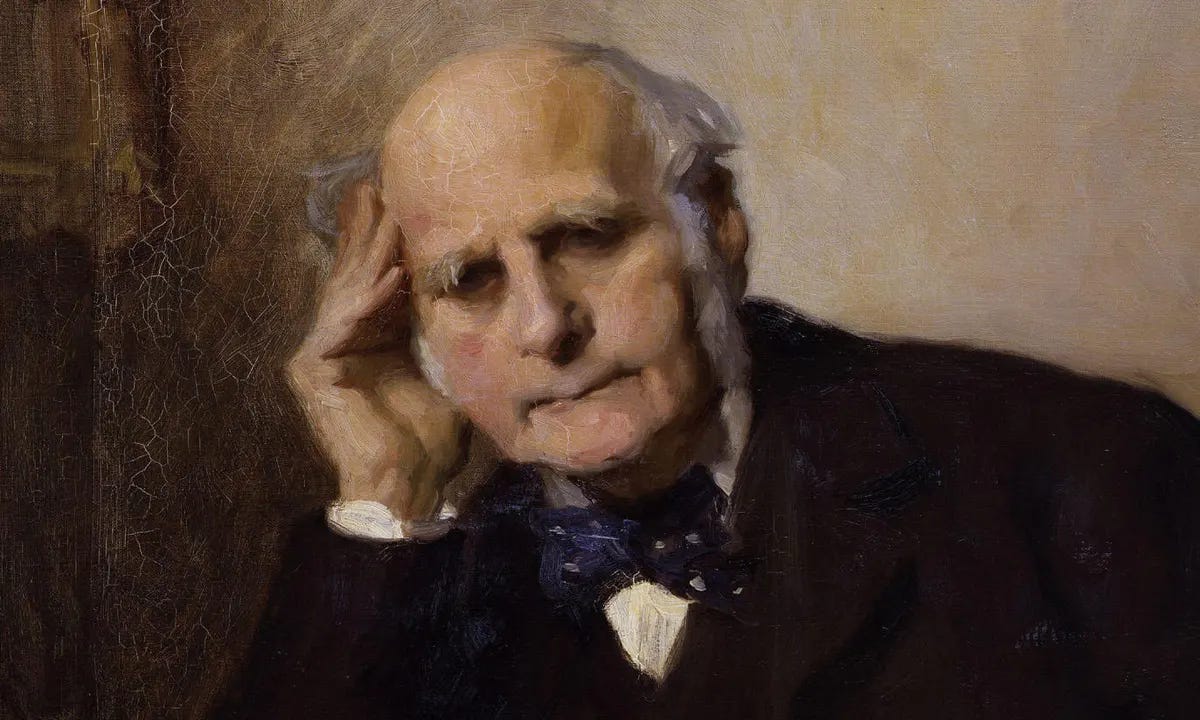

You should follow Gavan’s work at his website Galton.org. In addition to his duties at Traitwell, Gavan has written a number of books. His intellectual interests, as recounted on Twitter, are “Data Science & Statistics, Sir Francis Galton, Sir Richard Francis Burton, JBS Haldane, Behavior Genetics.”

Gavan was born in South Africa and currently resides in St. Louis. It’s a great joy to work with him and the rest of the team at Traitwell. Gavan is, like me, something of an autodidact and a polymath.

Now here I am showing my biases: I’m generally a fan of polymathic figures, whom I regard as essential for most of the progress we’ve experienced. Our founders were polymaths and yet our government is run by obsessive specialists. I think sometimes it shows.

I agree with science fiction writer (and Navy man) Robert Heinlein:

A human being should be able to change a diaper, plan an invasion, butcher a hog, conn a ship, design a building, write a sonnet, balance accounts, build a wall, set a bone, comfort the dying, take orders, give orders, cooperate, act alone, solve equations, analyse a new problem, pitch manure, program a computer, cook a tasty meal, fight efficiently, die gallantly. Specialization is for insects.

And insects need to be quashed. No, I don’t really trust hyper-specialized people. In fact I’m somewhat of the view that many of the problems we face today come from people who have the right credentials but the wrong approach to learning or processing the world. Whenever I find a genuine polymath I try to work with them.

The posts were a sort of shared work for Traitwell and they represent the sort of thinking that we share.

Where genomics is now. Where it is going. What is possible.

First, some background is necessary, to recapitulate concepts the rest of this discussion depends on. Many of these concepts may already be familiar, others may not be. Inevitably some simplification is necessary, to reach a broader audience. On the other hand, a great deal of additional explication enables those less steeped in this area to profit from the discussion.

Genes are ubiquitous. They affect just about every human and animal quality, physical and non-physical. For convenience, call all of these ‘traits’. When we measure the heritability of any trait from observed data, it is substantial. By heritability we mean the extent to which differences in a trait are the result of differences in genes. We are interested here in variation, so to speak, not in normal or average values. It is possible for something to be completely under genetic control without seeming heritable, since there might be no natural variation in it. However, if something is heritable it must be under a corresponding degree of genetic control. Heritability is usually expressed as a percentage, ranging from none (0) to total (100), e.g. 73%. Since the future of genomics will be driven by the all-pervasive nature of genetic effects, some more explanation is called for.

Heritabilities are estimated from pedigree studies, first initiated in the 19th century. Here we do not need to know what the operative genes are at the DNA level; we can still infer that they must exist. Among humans, these pedigree studies include twins (fraternal or identical, raised together or raised apart), siblings and more distant relatives, and adoption studies of biologically unrelated siblings. The underlying contrasts include genes being held constant, and non-genetic effects being held constant. The data can all be combined into one large ‘structural equation model’ to provide an estimate of heritability which represents the complete weight of known evidence. For many physical traits, heritability approaches 1, so that differences in the trait are almost wholly the result of genetic differences. For behavioural traits like intelligence it is as high as 0.7. For personality and just about every other behavioural trait it is approximately 0.5, sometimes less, sometimes more.
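To make the twin contrast concrete, here is a minimal sketch using Falconer's formula, a classical textbook shortcut that is not named above and is far simpler than the structural equation models just described. It estimates heritability as twice the difference between the identical-twin and fraternal-twin correlations. The function name and the correlations are hypothetical, chosen only for illustration.

```python
def falconer_h2(r_mz: float, r_dz: float) -> float:
    """Rough heritability estimate from twin correlations.

    r_mz: trait correlation between identical (monozygotic) twins
    r_dz: trait correlation between fraternal (dizygotic) twins
    """
    h2 = 2.0 * (r_mz - r_dz)
    # Sampling noise can push the raw value outside [0, 1], so clamp it.
    return max(0.0, min(1.0, h2))


# Hypothetical correlations, not taken from any real study.
estimate = falconer_h2(r_mz=0.80, r_dz=0.45)
print(f"estimated heritability: {estimate:.2f}")
```

With these made-up inputs the estimate comes out at about 0.7. Real analyses pool many kinds of relatives rather than relying on a single contrast like this one.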

If anything, currently-known heritabilities are underestimates, since we only have natural experiments (differences observed in the real world) available to make these inferences. If we had experimental control we could introduce variation, of an artificial kind if necessary, as desired and see what the results are, thereby gaining a much more accurate idea of genetic influence (though perhaps not in real-world conditions). For human subjects this is usually not possible due to ethical constraints—although those may fluctuate over time.

What is not heritable is strictly speaking non-genetic. That includes all the influences commonly understood as the ‘environment’, a very loose term for nurture, but which also includes many sources of error, including measurement error, construct invalidity of the trait measures (which may only obliquely get at the underlying trait), and random developmental error. It is important not to simply conflate the popular sense of ‘environment’ here with non-genetic influences. Those are effects which do not trace to the genes of the individual subjects considered, but they do include the genes of other people. Environments also correlate with genes, since those elicit responses; they are constructed actively by genes, since humans and animals are niche developers and not helpless victims like potted plants in greenhouses.

We can only reason about the world that we experience, as a large natural experiment. It is certainly possible that other worlds exist in which things are different. It is not even hard to imagine such a world, or to make it happen. Take intelligence, highly heritable as we have mentioned at about 70%. If we changed the world into a nightmare in which we stunned all newborns over the head with a sledgehammer at birth, everyone would be brain damaged, and with enough zeal in administering blows heritability of intelligence might drop to zero. But that is not the world that we live in. Nevertheless, the range of variation within the world that we actually experience suggests how much non-genetic influences would have to change (to stretch beyond that range).

To see just how pervasive heritability is, an example is useful. Humans have accidents. They cut their thumbs off sawing wood, they crash their cars, they fall into holes in the sidewalk while texting on their phones, they step on rakes on the lawn while lollygagging at their attractive neighbours. The number of lifetime accidents is a trait like any other, it can be measured and its heritability can therefore be computed from pedigree studies. The data shows that it is substantially heritable, despite the initial impression that these must be random events. Twins will have not-dissimilar rates of accidents over their lifespan. Clearly what underlies this trait is something like risk-taking and lifestyle choice, and genes influence both of those.

Knowing that traits have a genetic basis is some distance from knowing which genes, precisely, are responsible. Indeed, we knew about heritability, and even had preliminary estimates for it when genes were still just a vague and wholly indefinite hypothesis, latent and never observed directly.

Some idea could be gained from the serious (and therefore more obvious) conditions classed together as ‘Mendelian Disorders’—extra fingers and toes are a good example. There are very many of these conditions, usually caused by a single mutant gene (or at most a few of them), but each one is very rare in real-world populations because they are purged by natural selection. Recall that humans are diploid, inheriting two copies of each gene, one from each parent, and things are complicated somewhat by that. Thus Mendelian disorders may be dominant (offspring always get them even with just one copy of the mutant gene) or recessive (offspring only get them if they get two copies of the mutant gene). For most of the 20th century, medical genetics occupied itself with these conditions, which are comparatively easy to study, as we will see.

After the Human Genome Project (and similar projects for other organisms) mapped most of the exact positions of genes, we learned mainly how ignorant we remained. Learning the address and position of something tells you next to nothing (most of the time) about what it actually does. Still, some genes were known to be implicated in some structures and processes, and studies used samples in which those genes were measured, along with traits, to look for statistical associations between genes and traits. These are now known as ‘candidate gene’ studies. They were disappointing overall. When associations were first found, with much fanfare, they often failed to replicate later. But they yielded a few good results for simpler conditions and are not wholly obsolete now.

A real breakthrough came in 2006 with the advent of Genome-wide Association Studies (GWAS). This is based on an insight that dates right back to the inventor of the heritability statistic, R.A. Fisher. To resolve the impasse created by the failure of candidate gene studies, researchers had to dig back to theoretical fundamentals. The model that Fisher originally developed for genes treated them as independent additive effects, each of tiny size. He thought that most traits are polygenic, with many genes contributing to the outcome, as opposed to the more modest number of single-gene traits like Mendelian disorders. It was a deliberate simplification but it turned out to be extremely useful in practice as well as in theory. The GWAS approach fits separate statistical models to a very large number of genes, to predict a trait, since there are far too many genes to model them jointly. Unlike candidate gene studies, the method is hypothesis-free and does not pre-judge which gene will prove effective. This allows estimation of the (usually tiny) effects that each gene has independently on the outcome, together with the statistical significance of the association and other facts. By trawling through large gene sets and samples of enormous numbers of individuals in this way, many associations are found.
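The one-model-per-gene idea can be sketched in a few lines. This is a toy simulation under stated assumptions, not a real GWAS pipeline: genotypes are simulated as independent allele counts, only five positions truly affect the trait, and their effects are made unrealistically large so that they stand out in a small sample. All names and numbers are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(0)
n_people, n_snps = 5000, 200

# Genotypes coded as counts (0, 1 or 2) of one allele at each position.
genotypes = rng.binomial(2, 0.3, size=(n_people, n_snps)).astype(float)

# Hypothetical truth: only the first five positions influence the trait.
true_effects = np.zeros(n_snps)
true_effects[:5] = 0.5
trait = genotypes @ true_effects + rng.normal(0.0, 1.0, size=n_people)

# Fit one simple regression per position: slope = cov(g, y) / var(g).
g_centered = genotypes - genotypes.mean(axis=0)
y_centered = trait - trait.mean()
slopes = (g_centered * y_centered[:, None]).sum(axis=0) / (g_centered ** 2).sum(axis=0)

# Rank positions by the size of their estimated effect.
top_hits = np.argsort(-np.abs(slopes))[:5]
print("strongest associations at positions:", sorted(top_hits.tolist()))
```

Each position is tested on its own, with no prior guess about which ones matter, which is the hypothesis-free character described above. Real studies also report a standard error and p-value per position and adjust for confounders such as ancestry, which this sketch omits.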

First, some loose usage of ‘gene’ here must be clarified. Strictly speaking genes are regions of the genome which code for proteins. Most of the genome does not directly code for proteins, but is still important in other ways, for instance by regulating actions of protein-coding genes, with consequences downstream which are still poorly understood. Below we will often refer to Single Nucleotide Polymorphisms (SNPs) instead. These are single positions within the genome. Again, all genes are sequences of these SNPs, but not all SNPs are directly involved in genes. These single positions can take alternative values, which are also referred to as ‘alleles’ (as for gene variants). When we associate a SNP variant with an outcome, we are glossing over the fact that we don’t necessarily know how that happens physiologically (but that is also true for many genes).

GWAS permits at least two kinds of inference about SNPs and traits: causal and predictive. Causal inference is important when searching for interventions, such as drugs to target specific conditions. If it is highly likely that a specific SNP causes, say, a disorder, then the physiological effects of that SNP, once those are determined, can provide targets for treating the condition through drugs. In some cases, direct genome editing is even possible in living fully developed subjects, as we will see later. However it is easy to find associations involving SNPs that are not causal because positions that are physically close together in the genome are linked together: if the value at one position changes, the other tends to change too: they travel together. The closer they are, the more likely they are to be linked (cumbersomely known as ‘linkage disequilibrium’ because they do not assort independently after sexual mating). Causal inference also presupposes a high level of confidence that an association found is not a spurious by-product of the very large number of SNPs tested. By controlling this false discovery rate using statistical means—say by requiring stringent levels of ‘statistical significance’—this possibility can be reduced and confidence raised.
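A minimal sketch of what stringent significance means in practice is the Bonferroni correction, which divides the acceptable error rate by the number of tests. Strictly speaking this controls the family-wise error rate rather than the false discovery rate mentioned above, so it is a simpler stand-in, not the only option. With roughly a million independent tests it gives the conventional genome-wide threshold of 5e-8. The SNP names and p-values below are hypothetical.

```python
def bonferroni_threshold(alpha: float, n_tests: int) -> float:
    """Per-test p-value cutoff that keeps the overall error rate near alpha."""
    return alpha / n_tests


def significant_snps(p_values: dict[str, float], alpha: float = 0.05) -> list[str]:
    """Return the SNPs whose p-values survive the Bonferroni cutoff."""
    threshold = bonferroni_threshold(alpha, len(p_values))
    return [snp for snp, p in p_values.items() if p < threshold]


# About one million independent tests gives the familiar genome-wide cutoff.
genome_wide = bonferroni_threshold(0.05, 1_000_000)
print(f"genome-wide threshold: {genome_wide:.0e}")

# Toy example with four made-up SNPs: the cutoff is 0.05 / 4 = 0.0125.
toy_p_values = {"rs_a": 0.001, "rs_b": 0.02, "rs_c": 0.3, "rs_d": 0.012}
survivors = significant_snps(toy_p_values)
print("survivors:", survivors)
```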

Predictive inference doesn’t care about causality per se, and only attempts to explain the outcome. If SNPs are found that do this, and are merely associated (along for the ride, so to speak) with the hidden SNPs actually doing the work, which may not have been specifically identified, that is fine. The SNPs that have been found are acting as proxies, and still enable explanation of the outcome. For predictive purposes, the numerous SNPs involved in a GWAS can be combined into a polygenic score (PGS). In the simplest case, such scores can be created, for any particular person (or animal), by adding up the SNP effect sizes found by the models, given the variants actually carried by that individual. This composite score can then be used as a predictor for the outcome, possibly with other predictors in a joint model.
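The simplest case described above, adding up effect sizes weighted by the variants a person carries, can be sketched as follows. The SNP names, effect sizes and allele counts are made up for illustration, and the function name is hypothetical.

```python
def polygenic_score(allele_counts: dict[str, int], effect_sizes: dict[str, float]) -> float:
    """Sum of (effect size x number of effect alleles carried) over all SNPs.

    SNPs missing from the individual's data are treated as zero copies,
    which is a simplifying assumption of this sketch.
    """
    return sum(
        effect * allele_counts.get(snp, 0)
        for snp, effect in effect_sizes.items()
    )


# Hypothetical per-SNP effect sizes, as a GWAS might estimate them.
effect_sizes = {"snp_1": 0.02, "snp_2": -0.01, "snp_3": 0.005}

# Hypothetical individual: number of effect alleles (0, 1 or 2) at each SNP.
person = {"snp_1": 2, "snp_2": 1, "snp_3": 0}

score = polygenic_score(person, effect_sizes)
print(f"polygenic score: {score:.3f}")
```

Here the score is 2 × 0.02 + 1 × (−0.01) + 0 × 0.005, or about 0.03. A real score would sum over thousands or millions of SNPs in exactly the same way.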

There are much more complicated methods in use for constructing polygenic scores, depending on the trait one wishes to predict. One may choose to filter the set of SNPs used, to only consider those less likely to be spurious, say by strictly controlling the false discovery rate and conservatively demanding stringent statistical significance for each one included. Usually this will drastically reduce the set of SNPs which go into the score. In practice it has been found that this typically does not improve predictive power, though it might. (Though this may surprise some, it is common knowledge in the machine learning field.) Often a PGS can be incorporated into a more complicated model for predicting an outcome, as an additional element, and re-weighted in the presence of other predictors. There are many possibilities, which will not concern us further here, beyond noting that the term is generic: there are many different kinds of polygenic scores, though they all do roughly the same thing.

We have spoken of SNP values above without specifying how those are obtained. Differences in the methods used to get them matter a great deal for the discussion that follows. First note that the ‘gold standard’ for sequencing genomes is so-called Sanger Sequencing. It is the most complete and accurate method known. However this remains very expensive and time-consuming per individual, and therefore many other technologies have been developed, but so far they all require compromises.

Most commonly, microarrays (optically-read ‘chips’ which are ‘stained’ by DNA) are used to obtain a large sample of SNP values for a particular individual. These are cost effective for the mass consumer market, and target SNP positions with variants which are not vanishingly rare in the population, and are already known or suspected to be of interest. They are a small sample of all SNPs and do not cover complicated genomic differences between individuals, where the structure has been altered by insertions, deletions, repeats, pseudo-genes and other complications. These variations are known to have important consequences for many traits. To detect many more SNPs, and cope (to a limited extent) with structural variation, more expensive ‘next-generation’ whole-genome sequencing is available. This is far less common in the consumer market. Recently this has become more affordable, much more so than Sanger sequencing, enabling its use in principle. Uptake so far has been modest but is expanding. Intermediate between chip sequencing and whole-genome sequencing, in both information yielded and cost, is ‘whole-exome’ sequencing, which attempts to sequence all genes, i.e. the SNP positions known to be involved in protein production.

Clinical use of genomics, however, has more rigorous demands, depending on the specific purpose of the sequencing. Inaccurate sequencing can have life-threatening consequences when doing, say, organ transplantation. Currently affordable ‘whole genome sequencing’ (a misnomer) is a short-read technology. To reduce error rates, the technology does not look very far ahead while scanning the genome. This limits its ability to deal with more complicated structural variation within the genome, as glossed over above. Correctly handling that requires much longer look-ahead while scanning the genome, and is collectively referred to as long-read sequencing. There are several different methods for doing this, while still moderating error rates, in development. Advanced clinical use currently relies on custom scanning of specific genome regions, from fresh samples, for the application at hand (say, organ transplants). This is very expensive.

There is an important goal here, not yet realized. It will drive future development of genomics. If the (structurally) complete genome sequence of an individual can be scanned, then it can be repeatedly mined for information. To the extent that this is affordable, value is unlocked, latencies are reduced, and a mass market is enabled. Genomics has not reached this point yet, only approximated it very imperfectly. It will inexorably drive toward that point in the future, unlocking much greater value.

Although hypothesis-free GWA studies of polygenic traits have found many associations for many traits, the amount of heritability that they explain using specific SNPs has typically been modest, for any one trait, to date. For height the figure is over 50%, based on more than 5 million people, but among behavioural traits the highest is intelligence, at about 15%. Compare that to the pedigree estimate of intelligence heritability at 70%. The heritabilities obtained from pedigree studies set an approximate upper limit of what we can expect to explain. In part the shortfall arises because such studies have used microarray data (mass sequencing requires affordable methods per subject). These microarrays only sample SNPs with less uncommon variants. Much variation must occur at SNPs with very rare variants. Each of these will affect fewer individuals but cumulatively will explain a lot of population variation. Extending studies to use whole-exome or, even better, short-read whole-genome sequencing will improve matters. This is already underway. Using long-read sequencing methods, or equivalents, will detect still more variation in more complex structure. Larger samples will also improve explanatory power. As these sample sizes have expanded in the past, to encompass many millions of individuals, a clear increase in explanatory power has resulted.

However, increasing sample size will not be enough alone. A trait like height is easy to measure, but other traits are often much more complicated. Measures of those traits must, and therefore will, improve too, removing attenuation due to measurement error. For example, intelligence studies have mostly used proxies such as self-reported educational attainment, rather than the best measures, cognitive test batteries. Paradoxically, the drive to increase sample size has introduced its own drawbacks by weakening the measures used—self-reported educational attainment is widely available, IQ tests far less so. As a practical matter, large sample sizes have often been achieved by cobbling together many smaller studies using meta-analysis techniques (single large studies are expensive). This has more pernicious consequences than thousand-author journal papers. The measures used in each component study are not necessarily consistent, giving a heterogeneous collection of somewhat disparate measures. This attenuates the combined study, reducing its ability to explain variation through a worse measure, while simultaneously expanding it through more subjects. On balance, less is explained than otherwise could be with strong and consistent trait measures. However this issue will be addressed in the future, as part of the inexorable drive to explain as much trait variation as possible.

Other improved techniques—e.g. MTAG, in which multiple traits are combined simultaneously to increase explanatory power because they share an underlying genetic architecture—will also help, as will improved methods for combining smaller studies to reduce introduced bias and other problems. Combining all these improvements, and many others we can only anticipate, will push explained variation by SNPs for each trait up toward the highly substantial levels we already know are feasible from pedigree studies.

Before considering specific applications of knowledge about the genes underlying traits, two general principles are very important. These will gird the discussion to come. Both involve aggregation. When effects of a modest size are combined, this produces a much stronger effect in aggregate. Even quite weak measures can be combined to form an effective whole. An important example of this is the creation of effective cognitive tests by Alfred Binet in the early 1900s. Individual items in those tests have very modest predictive power. They are not even all that consistent individually, as the same person will get variant results retaking an item. Binet’s breakthrough (probably serendipitous) was to combine many such items into an overall measure that proves to be reliable in aggregate. This is analogous to tying sticks into a bundle. Individually the sticks are weak and easy to break. A large bundle is much stronger and harder to break. The same process underlies the resilience of averages, and is the key reason why we use them so much. Individual observations may contain large errors. Averaging them tends to cancel out the errors, giving a reliable overall measure. This is the best estimate of the underlying value if the observations are not biased.
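The way averaging cancels errors can be checked with a small simulation. This is a sketch under simple assumptions: every observation is the true value plus independent, unbiased noise, and all the numbers are hypothetical. Under those assumptions the spread of an average of n observations shrinks roughly in proportion to 1/√n.

```python
import numpy as np

rng = np.random.default_rng(1)
true_value = 100.0   # the underlying quantity we are trying to measure
noise_sd = 15.0      # error in any single observation
n_repeats = 20_000   # how many times each experiment is repeated


def spread_of_mean(n_items: int) -> float:
    """Standard deviation of the average of n_items noisy observations."""
    observations = true_value + rng.normal(0.0, noise_sd, size=(n_repeats, n_items))
    return float(observations.mean(axis=1).std())


spreads = {n: spread_of_mean(n) for n in (1, 25, 100)}
for n, spread in spreads.items():
    print(f"{n:>3} items averaged -> typical error about {spread:.1f}")
```

A single observation is typically off by about 15 points, an average of 25 by about 3, and an average of 100 by about 1.5. That is the bundle-of-sticks effect in numerical form.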

There are two kinds of aggregation that will prove useful here: aggregation over many individuals, and aggregation over many traits. Suppose that we have a modest association between a set of genes and a trait such as educational attainment. To date we can explain perhaps 15% of the variance in educational attainment using genes alone, but the exact number doesn’t matter for these purposes.

Consider a large organization which has to deal with very many individuals. Let’s say several thousand. If the organization has genetic sequence data for each individual, they can predict the level of educational attainment, say in years completed, that individual would obtain. They could do this at birth, for example, or even at conception. Alternatively, at maturity. However, because the association with known genes is modest, these predictions would often be off target. Let us also suppose that the organization proposes to pay for the education of each individual. To budget for this, they need to know how far in years each is likely to go. They could simply guess this from general experience, but they happen to know that their individuals are rather a lot smarter than average, and suspect that more of them will go far than in most organizations. In aggregate, the result of their genetically-based prediction will be a fair approximation and a good basis for reasoning, because errors (some will be high, some low) will tend to cancel out when taken together. With enough individuals, even a very weak predictor can be used to advantage. This is similar to how casinos can still make money on the roulette wheel even against perfectly rational gamblers playing black versus red randomly: the slot with no colour gives them a slight but invincible edge which can be exploited at scale.
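The budgeting scenario can be sketched as a simulation. Everything here is an assumption made for illustration: a genetic score explaining 15% of variance, a population averaging 14 years of education with a standard deviation of 3, and an organization whose 5,000 members have genetic scores one standard deviation above average.

```python
import numpy as np

rng = np.random.default_rng(3)
n_members = 5000
r = np.sqrt(0.15)              # score explains 15% of the variance
pop_mean, pop_sd = 14.0, 3.0   # hypothetical years of education

# Members have above-average genetic scores (shifted up by one SD).
score = rng.normal(1.0, 1.0, size=n_members)
noise = rng.normal(0.0, 1.0, size=n_members)
actual = pop_mean + pop_sd * (r * score + np.sqrt(1 - r**2) * noise)

# Genetically-based prediction for each member.
predicted = pop_mean + pop_sd * r * score

individual_rmse = float(np.sqrt(np.mean((predicted - actual) ** 2)))
aggregate_error = float(abs(predicted.mean() - actual.mean()))
naive_error = float(abs(pop_mean - actual.mean()))

print(f"typical miss for one individual: {individual_rmse:.2f} years")
print(f"miss on the group average:       {aggregate_error:.2f} years")
print(f"miss using the population mean:  {naive_error:.2f} years")
```

Individual predictions miss by nearly three years, yet the predicted group average lands within a small fraction of a year of the truth. Guessing from general experience (the population mean) misses the group average by more than a year. The weak predictor is poor for any one person but good enough for the budget.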

Filtering is another application of the same idea. Suppose you have a large collection of people, many millions, and must locate an individual with an IQ of at least 175, to work on very important matters. Such people are rare, and you may have to test many before you find one. You don’t have those IQ test results yet, but suppose you happen to have genetic information for each individual. Let’s stipulate that your genetic test only explains perhaps 13% of the variance in IQ using a full scale cognitive battery. Many of the individuals you pick out as 175 plus IQs will not be that smart, but one will almost certainly be. That is a much smaller set of people than your overall set, and it will be much cheaper and faster to administer cognitive tests to that smaller set than the whole population. Do not scorn tests with modest associations, they can still be very helpful.
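The filtering idea can also be simulated. An IQ of 175 is too rare to show up reliably in a simulation of modest size, so this sketch swaps in a softer target, roughly the top 0.1% of the population, while keeping a predictor that explains 13% of the variance. The population size, shortlist size and cutoffs are all assumptions made for illustration.

```python
import numpy as np

rng = np.random.default_rng(2)
n_people = 1_000_000
r = np.sqrt(0.13)   # genetic score explains 13% of the variance

# Standardized genetic score and the true (not yet measured) trait.
score = rng.normal(size=n_people)
true_z = r * score + np.sqrt(1 - r**2) * rng.normal(size=n_people)

# Softer stand-in for "IQ of at least 175": about the top 0.1%.
target = true_z > 3.09

# Shortlist the top 1% by genetic score; only these would be tested.
shortlist = score >= np.quantile(score, 0.99)

base_rate = float(target.mean())
shortlist_rate = float(target[shortlist].mean())
enrichment = shortlist_rate / base_rate

print(f"hit rate when testing everyone:   {base_rate:.4%}")
print(f"hit rate within the shortlist:    {shortlist_rate:.4%}")
print(f"enrichment from the weak predictor: {enrichment:.1f}x")
```

Most people on the shortlist still fall short of the target, but the target group is many times more common there than in the population at large, so far fewer cognitive tests are needed per person found.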

The second kind of aggregation operates over many traits rather than many individuals. It can be applied to a single individual. If you predict a single trait for an individual, having modest predictive validity is a drawback. The results will be noticeably weak, and may seem hardly worthwhile (as predictions). The initial feeling of fun and novelty could wear off quickly. However, suppose you are able to make very many predictions or offer many pieces of advice to that individual based on a great number of traits. The overall effect will be aggregated, and it is possible to obtain a reliable level of usefulness. Systems can be built to do this, acting as a ubiquitous adviser over many domains and underlying traits. The key is to have a recognizable impact from the point of view of an individual. We will return to this concept below.

In what follows we will usually be talking about human subjects. However, the same concepts apply, sometimes with modifications, to other organisms: animals (pets, livestock, conserved wildlife), bacteria and even viruses. Commercial opportunities exist for the application of these ideas in all those cases; some are already in practice, while others will be novel in the years to come as genetic knowledge expands. A key difference is the extent to which experimental control is possible: with animals, bacteria and viruses, this is much more feasible than with humans, where many ethical constraints operate (though these may vary internationally and change over time). Where differences are important they will be called out.

We will start with areas in which genomics is currently applied commercially, then work through extensions expected to take place. In the simplest cases these are incremental (though none the less valuable) changes. Indeed, experience shows that most research and development is incremental. More fundamental changes will be discussed too, which are necessarily more speculative, but the temptation to indulge in flights of fancy has been kept in check. Only developments which are feasible in principle are discussed.

We will continue this series.